Generative AI (GenAI) is revolutionising business processes but comes with its own security risks, according to the World Economic Forum’s Global Cybersecurity Outlook 2026.

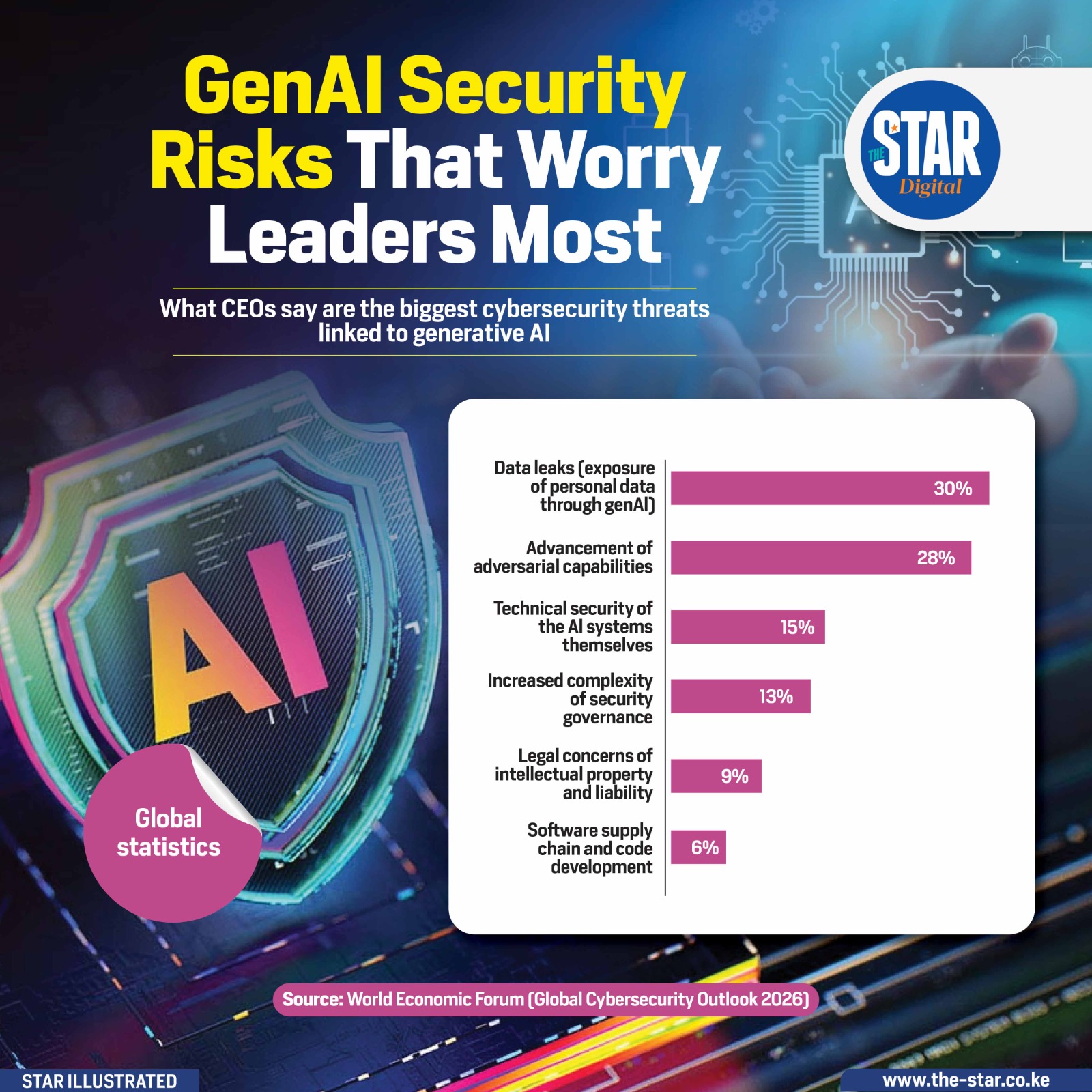

Data leaks through GenAI are the top concern among CEOs, cited by 30% of organisations surveyed. Close behind, 28% worry about the advancement of adversarial capabilities, where AI may be manipulated to compromise systems.

Other challenges include the technical security of AI systems (15%), increased complexity in governance (13%), legal concerns over intellectual property (9%), and risks in software supply chains (6%). These concerns underline the growing recognition that while AI offers efficiency and innovation, without robust safeguards, it can introduce vulnerabilities into corporate operations.

Organisations are now tasked with balancing innovation with security, ensuring that AI systems are not only effective but also transparent, auditable, and resilient.

Leaders are prioritising data protection, ethical AI use, and compliance frameworks to reduce exposure. As adoption grows, understanding these security risks is crucial for integrating AI sustainably and for safeguarding both corporate assets and public trust.
